Two weeks of fine-tuning VLAs in sim: what worked, what broke

I spent a couple of weeks fine-tuning vision-language-action policies to pick colored books off a shelf in MuJoCo. One 5070 Ti, no cloud. I went ACT → SmolVLA → harder scene. The SmolVLA fine-tune on the simple scene peaked at 60% and produced the only clip I’m proud of: picking all four colored books back-to-back in one scene, handling rack states it never saw at training. Then I changed one thing about the test (shuffled where the books sat on the shelf) and the same model dropped to 17.5%. Replaying the eval RNG gave a clean breakdown: 14/26 when the target color happened to land in its canonical slot and 0/54 when it didn’t. The model had never used vision to find the color. It memorized which slot was which. The literature-recommended mitigation (counterfactual augmentation) made it strictly worse on both conditions. The harder scene I tried after that went completely brain-dead (0/10). A replay diagnostic confirmed the model couldn’t reproduce its own training data. Two weeks, three architectures, four scenes, zero clean wins. Here’s the full log.

The setup

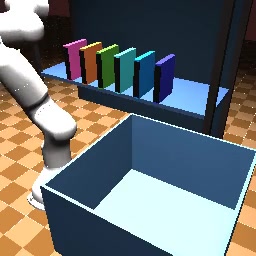

The simple scene I used for most of this is small: MuJoCo Franka Panda, a single-shelf rack with 4 colored books (red, green, blue, yellow at fixed y-positions), an open carton in front. A hand-tuned damped-least-squares IK pick-and-place state machine generates demonstrations at 20 Hz with two cameras (base + wrist) at 224×224 RGB. Per-episode randomization on book positions (±5 mm), carton (±20 mm), base camera (±30 mm xyz), light intensity (±20%), and 12 instruction templates like “pick the red book and place it in the box”.
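As a rough illustration of the per-episode randomization described above, here is a minimal sketch. The ranges come from the text; the uniform distribution, the scalar (single-axis) book and carton offsets, and every name in the snippet are assumptions of mine, not the actual demo generator.

```python
import random

COLORS = ["red", "green", "blue", "yellow"]
N_TEMPLATES = 12  # number of instruction templates quoted in the text


def sample_episode_randomization(rng: random.Random) -> dict:
    """Draw one episode's perturbations (hypothetical layout, uniform sampling assumed)."""
    return {
        # +/- 5 mm per book, expressed in metres
        "book_offset_m": {c: rng.uniform(-0.005, 0.005) for c in COLORS},
        # +/- 20 mm for the carton
        "carton_offset_m": rng.uniform(-0.020, 0.020),
        # +/- 30 mm on each of x, y, z for the base camera
        "base_camera_offset_m": [rng.uniform(-0.030, 0.030) for _ in range(3)],
        # +/- 20% light intensity as a multiplicative scale
        "light_scale": rng.uniform(0.8, 1.2),
        # which of the 12 instruction templates to use
        "template_index": rng.randrange(N_TEMPLATES),
    }


episode_params = sample_episode_randomization(random.Random(0))
```

Passing an explicit `random.Random` instance rather than using the module-level RNG keeps each episode reproducible from its seed.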

I wanted a single policy that maps (image, prompt) to joint targets.

Phase 1: ACT plateaus at 37.5%

I started with ACT (Action Chunking Transformer, ~52M params). ACT has no language pathway, so a single ACT on all 4 colors should fail by construction. It did. 0/40. The model picks something, just not the correct color.

The standard workaround is per-color specialists: train four separate ACT models, parse the color from the prompt at eval, dispatch to the right one. This worked, sort of:

| ACT setup | demos / color | success |
|---|---|---|
| ACT v1 specialists | 50 | 37.5% |
| ACT v2 specialists | 140 | 37.5% |

Nearly tripling the demos per specialist (50 → 140) bought me nothing. ACT had hit a ceiling on this task, and that ceiling turned out not to be data-bound. Reasonable result, well-known story.

The takeaway from phase 1 wasn’t “ACT is bad.” It was “I want one model that reads the prompt instead of four routed by string-matching.”
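For reference, the string-matching dispatch used by the per-color specialists can be sketched roughly like this. The function names and the regex approach are my own illustration, not the code from the project.

```python
import re
from typing import Dict, Optional, TypeVar

Policy = TypeVar("Policy")

COLORS = ("red", "green", "blue", "yellow")
_COLOR_RE = re.compile(r"\b(" + "|".join(COLORS) + r")\b", re.IGNORECASE)


def parse_color(prompt: str) -> Optional[str]:
    """Return the first known color word in the prompt, or None if there is none."""
    match = _COLOR_RE.search(prompt)
    return match.group(1).lower() if match else None


def dispatch(prompt: str, specialists: Dict[str, Policy]) -> Policy:
    """Pick the specialist policy whose color appears in the prompt."""
    color = parse_color(prompt)
    if color is None or color not in specialists:
        raise ValueError(f"no specialist for prompt: {prompt!r}")
    return specialists[color]
```

All of the language handling lives in the regex here, outside any model, which is exactly the limitation that motivated moving to a policy that reads the prompt itself.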

Phase 2: SmolVLA gets language to work

I swapped in SmolVLA-450M (LoRA on the action expert + LM, vision encoder frozen, the standard recipe, more on that later). The training set was the same 524-episode dataset I’d been using for ACT, just with the per-episode instruction string passed through.

First run, 10K steps, batch 4, ~0.42 epochs: 25%. Worse than ACT. The dataset was barely getting touched.

Second run, 50K steps (~2 epochs from the same 10K checkpoint): 50%. Better than the ACT plateau by 12.5 percentage points, and on harder eval distributions (12 prompt templates, 8 of which weren’t in the training set) it still scored 40%.

The language head was real, not just memorized-template lookup. Eval successes included unseen phrasings:

- “transfer the red book to the carton”: 4/13 (training had only “pick X and place it in the box”-style templates)
- “deposit the blue book into the box”: 3/8
- “store the green book in the box”: 4/6

The one clean failure mode was “the X book goes in the box”. 0/8 across all colors. The non-imperative grammar (book as syntactic subject) broke the language pathway entirely. I noted that as a known issue and moved on.

One more thing about this checkpoint. It could only pick one book at a time. Asked to pick all four colors in a row in the same scene, starting each pick from wherever the arm happened to be after the last one, it scored 1/4. Only the first pick worked. The rest timed out.

The model had no recovery behavior for the post-first-pick state. It had only seen episodes that start from the home pose with all four books still on the rack. I fixed this in Phase 3 with a trick that turned out to be embarrassingly important.

Phase 3: the dataset rebuild that peaked at 60%

Inspired by the 40-50% range, I rebuilt the dataset. 3 cameras (added an overhead view), 12 prompt templates baked in, 600 quality-scored demonstrations (the scripted controller occasionally produces partial successes, and I filtered those out). Same model architecture and recipe.

A quick look at the v3 training pipeline. The lighting, camera, and prompt variation all carry over to v3-final.

One note: this clip is actually from an earlier iteration of the v3 dataset where I’d shuffled the books at training time (random color↔slot permutation per episode). That run never broke 15%. v3-final retrained on a non-shuffled version of the same scene, which is what hit 60%. The shuffle question is what comes back to bite me two sections from now.

The v3-final run peaked at 60% on an 80-episode eval at step 22,000. The 32K and beyond checkpoints regressed slightly. 22K was the global maximum.

The clip I’m still proud of came from this checkpoint. In an interactive demo with the arm auto-resetting to its home pose between picks, the model handled all four colors back-to-back in a single scene, recovering between picks, picking each next book from a rack state (3 books left, then 2, then 1) it never saw at training:

blue OK 76 ticks "pick up the blue book"

yellow OK 99 ticks "pick up the yellow book"

green OK 67 ticks "move the green book now"

red OK 96 ticks "drop the red book in the box"

The trick was the arm-reset between picks: each prompt starts in the in-distribution start state SmolVLA was trained on. Without it, you regress to a 1/4 sequential rate. The model has no recovery behavior for the post-first-pick distribution.

Without that reset trick, I would have called this a partial failure. With it, it’s the only clip from the project I’d actually show someone.
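The reset-between-picks loop can be sketched as follows. The callables `reset_arm` and `run_episode`, and the `max_ticks` default, are hypothetical stand-ins for whatever the demo harness actually exposes; only the control flow is the point.

```python
from typing import Callable, Iterable, List, Tuple


def run_sequential_picks(
    prompts: Iterable[Tuple[str, str]],                    # (color, prompt) pairs
    reset_arm: Callable[[], None],                         # hypothetical: arm back to home pose
    run_episode: Callable[[str, int], Tuple[bool, int]],   # hypothetical: returns (ok, ticks)
    max_ticks: int = 400,                                  # assumed timeout, not from the post
    reset_between_picks: bool = True,
) -> List[Tuple[str, bool, int]]:
    """Run one pick per prompt in the same scene, optionally re-homing the arm first."""
    results = []
    for color, prompt in prompts:
        if reset_between_picks:
            # Only the arm is reset; books already picked stay in the carton,
            # so the rack state still differs from anything seen in training.
            reset_arm()
        ok, ticks = run_episode(prompt, max_ticks)
        results.append((color, ok, ticks))
    return results
```

Setting `reset_between_picks=False` corresponds to the earlier sequential test, where each pick started from wherever the arm ended up after the previous one.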

The break: a different test, same model, under a third of the success

So far, books had always sat in the same shelf-slot order at training AND at eval. Red was always on the far left, yellow on the far right, blue and green in between. The model saw the same color↔slot mapping every episode.

I flipped on position shuffle at eval. Random color↔slot permutation per episode. Same model, same prompts, only the visual scene changed.

60% → 17.5%. A clean 3.4× collapse.

Replaying the eval RNG (deterministic seed 42) lets me recover the per-episode slot permutation and cross-tabulate it against OK/FAIL outcomes. The result was binary:

| target position | OK | FAIL | success |
|---|---|---|---|
| canonical slot | 14 | 12 | 53.8% (≈ in-distribution) |
| non-canonical slot | 0 | 54 | 0.0% (never, not once) |

Zero of 54 episodes succeeded when the target color was anywhere other than its training slot. The 17.5% headline decomposes exactly: 0.325 × 0.538 + 0.675 × 0.000 ≈ 0.175. The model had never used vision to find the color. It memorized “red prompt → reach for slot 0, blue prompt → slot 2, …” and used the vision tower only for arm-trajectory localization, not for object identification.

Here’s what that looks like. The prompt is “blue”, the actually-blue book has been shuffled to a different slot, and the canonical-blue slot now holds the red book. Watch where the arm goes:

It descends on the canonical-blue slot, grabs the red book that’s sitting there, drops it in the carton. Fails. Loops. Does it again.

This sharpens the Robust Skills, Brittle Grounding paper (Emukpere et al.). They reported a ~2% non-canonical “instruction-correct” floor under continuous Cartesian jitter, attributable to accidental near-canonical matches. With discrete permutations, the floor is cleanly 0%.
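The cross-tabulation behind the 14/26 and 0/54 split can be sketched as below. The order of RNG draws in `replay_episodes` is an assumption (the real harness may consume the RNG differently, so this will not reproduce the actual seed-42 layouts), and all names are illustrative. The counts at the bottom are the ones reported above.

```python
import random
from collections import Counter

# Canonical slot order from the text: red far left (slot 0), yellow far right (slot 3).
COLORS = ["red", "green", "blue", "yellow"]


def replay_episodes(seed: int, n_episodes: int):
    """Re-draw (slot layout, target color) per episode; draw order is assumed."""
    rng = random.Random(seed)
    episodes = []
    for _ in range(n_episodes):
        slots = COLORS[:]          # slots[i] is the color sitting in slot i
        rng.shuffle(slots)
        target = rng.choice(COLORS)
        episodes.append((slots, target))
    return episodes


def is_canonical(slots, target) -> bool:
    """True when the target color sits in the slot it occupied during training."""
    return slots.index(target) == COLORS.index(target)


def crosstab(episodes, outcomes) -> Counter:
    """Count (condition, result) pairs from replayed layouts and logged OK/FAIL flags."""
    table = Counter()
    for (slots, target), ok in zip(episodes, outcomes):
        condition = "canonical" if is_canonical(slots, target) else "non-canonical"
        table[(condition, "OK" if ok else "FAIL")] += 1
    return table


def headline_rate(table: Counter) -> float:
    """Overall success rate implied by the cross-tab."""
    total = sum(table.values())
    return (table[("canonical", "OK")] + table[("non-canonical", "OK")]) / total


# Counts reported in the post: 14 OK / 12 FAIL canonical, 0 OK / 54 FAIL non-canonical.
reported = Counter({("canonical", "OK"): 14, ("canonical", "FAIL"): 12,
                    ("non-canonical", "FAIL"): 54})
overall = headline_rate(reported)  # 14 / 80
```

Because every success sits in the canonical row, the overall rate is just the canonical success rate scaled by the fraction of episodes that happened to be canonical.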

The failed mitigation

The literature-recommended fix for this kind of grounding collapse is counterfactual data augmentation: synthesize demonstrations where the color↔slot mapping varies, so the model can’t shortcut on slot-position.
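In this setup, the counterfactual part amounts to drawing a fresh color↔slot permutation per demonstration and pointing the scripted controller at wherever the target color actually landed. A minimal sketch, with a hypothetical spec format and function name of my own:

```python
import random

CANONICAL = ["red", "green", "blue", "yellow"]  # training-time slot order


def make_counterfactual_specs(n_demos: int, seed: int = 0) -> list:
    """Build demo specs where the target's slot follows the color, not the prompt."""
    rng = random.Random(seed)
    specs = []
    for _ in range(n_demos):
        slots = CANONICAL[:]
        rng.shuffle(slots)                      # random color-to-slot permutation
        target = rng.choice(CANONICAL)
        specs.append({
            "slots": slots,                     # slots[i] is the color in slot i
            "target": target,
            "target_slot": slots.index(target),  # where the controller should reach
        })
    return specs
```

With uniform permutations, the target lands in its canonical slot about a quarter of the time, so slot position alone stops predicting the right action.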

I collected 1500 additional shuffled scripted demonstrations and retrained SmolVLA on the combined 600 canonical + 1500 shuffled dataset.

| eval condition | baseline | + aug |
|---|---|---|
| canonical | 60.0% | 8.8% |
| shuffled | 17.5% | 5.0% |

Worse on both. Lose-lose.

I spent a couple of days trying to rule out boring explanations: bf16 vs fp32 (matched the v2 recipe exactly), state schema (15-D vs 8-D, retrained from scratch), and insufficient training (loss plateaued at 22K and per-checkpoint eval bounced between 5% and 15% with no monotonic trend). None moved the number. The model didn’t break; it just got systematically worse on both distributions. My best explanation is that the added data made the language→object pathway harder to learn at this model size and trainable budget.

That independently corroborates what I take to be the Brittle Grounding paper’s most important practical claim: scaling demonstrations does not close this gap at the SmolVLA-LoRA budget. The architecture has a structural ceiling here.

Phase 4: a harder scene, 0/10

If the simple scene was hitting a structural ceiling, the obvious move was to either grow the model or simplify the task. I did neither. I made the task harder. Bigger rack (2 rows × 6 columns instead of 1×4), 4-cell carton instead of one carton bin, side-grasp instead of vacuum gripper, multi-cue prompts like “pick the red book in the bottom row, leftmost, and put it in the front-left cell.”

240 demos covering 10 of 12 (book, cell) pairs (one pair held out for compositional eval), with the same scripted DLS-IK controller. Standard SmolVLA recipe:

bs = 16

freeze_vision = True # frozen SigLIP

train_expert_only = True

chunk_size = 50

lr_peak = 1e-4

precision = bf16

20K steps. Training loss dropped 0.604 → 0.056. Looked textbook.

Eval: 0/10.

I tried a warm restart from the 20K checkpoint with a fresh optimizer and scheduler, so the LR would ramp back up to peak and (in theory) knock the policy out of whatever local basin it had settled into. I evaluated every 5K steps. All four checkpoints (10K, 15K, 20K, 25K) scored zero successes on five or more eval episodes each. The decision gate triggered; I killed the run.

The diagnostic I should have run on day one: take a recorded training episode, feed its exact prompt, frames, and proprioceptive state back through the trained policy, and compare the predicted action chunk to the recorded ground-truth chunk. The model had seen this exact sequence hundreds of times.

max action error at t=0 : 0.12 rad

max action error at t=150 : 1.30 rad

The first time I saw those numbers I assumed I’d broken something in the eval harness. I re-ran the diagnostic on three different recorded episodes. Same shape every time.

At t=0 the policy is roughly correct (all 240 demos start from the same arm pose, so the average is also roughly correct). By t=150 it’s just predicting the dataset’s average trajectory, regardless of input. The per-demo signal is gone. The model couldn’t reproduce its own training data. On the training set. With the literal training inputs.
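The replay diagnostic is simple enough to sketch in plain Python. The episode dictionary layout and the `predict_chunk` callable are hypothetical placeholders for the real dataset format and policy wrapper.

```python
from typing import Callable, Dict, Iterable, Sequence

Chunk = Sequence[Sequence[float]]  # indexed as [timestep][joint]


def max_abs_error(predicted: Chunk, recorded: Chunk) -> float:
    """Largest absolute per-joint difference between two action chunks."""
    return max(
        abs(p - r)
        for p_row, r_row in zip(predicted, recorded)
        for p, r in zip(p_row, r_row)
    )


def replay_diagnostic(
    episode: dict,                                   # hypothetical: prompt, frames, actions
    predict_chunk: Callable[[object, str], Chunk],   # hypothetical policy wrapper
    timesteps: Iterable[int],
    chunk_size: int = 50,
) -> Dict[int, float]:
    """Feed recorded training inputs back through the policy and compare action chunks."""
    errors = {}
    for t in timesteps:
        recorded = episode["actions"][t : t + chunk_size]
        predicted = predict_chunk(episode["frames"][t], episode["prompt"])
        # Truncate the prediction if the episode ends before the chunk does.
        errors[t] = max_abs_error(predicted[: len(recorded)], recorded)
    return errors
```

A policy that has actually fit its training data should give small errors at every sampled timestep, not only at t=0 where all demos share a start pose.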

Three suspects

In rough order of how much I blame them:

1. Frozen SigLIP probably never actually learned to discriminate colors. This is the one I’d bet on.

In the simple scene the books always sat in the same shelf-slot order at training time. Red on the far left, yellow on the far right, blue and green in between. The simplest thing the model could learn was “red prompt → reach for slot 0, blue prompt → slot 2, …”, not “recognize red book in the camera image”. The shuffle eval that dropped the simple-scene model to 0/54 on non-canonical positions basically proved that’s what happened. The 60% on canonical eval wasn’t color discrimination, it was position memorization.

In v4 books also sit in fixed positions during training, so the same shortcut should work in principle. But v4’s prompts are denser: multi-cue spatial descriptions, 170 unique strings versus the simple scene’s 12 templates, and only ~1.4 demos per unique string. The model can’t memorize 170 different per-prompt position lookups with that little supervision. And frozen SigLIP, processing each book at roughly one 16-pixel patch, doesn’t give the action expert enough discriminative visual signal to fall back on.

About “unfreezing SigLIP”. That’s not really a knob you turn inside the SmolVLA recipe. The paper bakes it in (Section 4.3, no ablation), the LeRobot config defaults to frozen, every public fine-tune I’ve read keeps it that way. So if frozen vision is the problem, the answer isn’t “I should have flipped a switch”. It’s “I should have used a different model”. π0.5 and MolmoAct2 both qualify.

2. chunk_size=50 lets flow-matching cheat. A 50-step chunk at 33 Hz is ~1.5 s of action. The flow-matching loss is satisfied in expectation by predicting the mean trajectory across all conditional modes that share a near-identical start state. With chunk=10 the model has to commit to a specific direction much sooner; with chunk=50 the early steps of “go toward the shelf” cover most of the loss landscape and the discriminating motion gets averaged out.

3. ~1.4 demos per unique prompt. 12 books × 4 cells minus 2 known-failing combinations is 46 valid (book, cell) pairs. Multiply by 9 verb pairs (pick/grab/take × place/put/drop) and you get 414 possible prompt strings. The dataset actually contains 170 unique strings, so 240 demos / 170 unique ≈ 1.4 demos per string. Thin even if (1) and (2) weren’t true.
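The arithmetic in suspects 2 and 3 is easy to check. The snippet below only restates the numbers quoted above; the variable names are mine.

```python
from itertools import product

# Suspect 2: how much wall-clock action one chunk covers.
chunk_size = 50
control_hz = 33
chunk_seconds = chunk_size / control_hz           # about 1.5 s

# Suspect 3: prompt coverage.
n_books, n_cells, n_known_failing = 12, 4, 2
valid_pairs = n_books * n_cells - n_known_failing  # 46 valid (book, cell) pairs

pick_verbs = ["pick", "grab", "take"]
place_verbs = ["place", "put", "drop"]
verb_pairs = list(product(pick_verbs, place_verbs))  # 9 verb pairs

possible_prompts = valid_pairs * len(verb_pairs)     # 414 possible prompt strings

n_demos, n_unique_strings = 240, 170
demos_per_string = n_demos / n_unique_strings        # about 1.4
coverage = n_unique_strings / possible_prompts       # fraction of prompt space seen
```

So the dataset touches well under half of the possible prompt strings, and each string it does touch gets barely more than one demonstration.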

The obvious next step that didn’t fit on the GPU

The natural progression from SmolVLA is π0.5 (openpi). I tried it. The pilot OOM’d at 16 GB after rematerialization, needing 5.37 GB more than the 5070 Ti has. Even openpi’s smallest LoRA variant (gemma_2b_lora + gemma_300m_lora) is too large for this hardware. The training config is set up and ready; it just needs a different machine.

What I’m taking forward

Phase 3 (the simple-scene SmolVLA fine-tune with the 4-color sequential demo) was the high-water mark for this entire project. v4 wasn’t a recipe mistake; the recipe is the recipe. v4 was the wrong task for this recipe. SmolVLA + frozen vision was workable for 4-color picking on the simple scene, and it ran out of room at 240 demos when I asked it to do 6 colors across 12 shelf positions and 4 carton cells. The deployable-target hypothesis (SmolVLA-class as the robok8s deployable for a publishing-house pick-and-pack demo) still stands; it just means I need either a model with more visual headroom or a task that fits inside SmolVLA’s headroom.

Concretely, the v5 plan is: rent an A100 or 4090 for a weekend and try π0.5 (the natural progression) or MolmoAct2 (smaller, different architecture, also has a trainable vision encoder). A different vision pipeline is where I think the grounding gap actually lives, but I haven’t tested that, and it’s worth saying out loud that I’m guessing.